FreeSync 2 in Action: How Good Is It (Right Now)?

FreeSync 2 is AMD's monitor technology for the next generation of HDR gaming displays. In a previous article we explained everything you need to know about it, and now we'll be giving our impressions of actually using one of these monitors for some gaming, and whether it's worth buying a FreeSync 2 monitor right now.

The monitor I've been using to test FreeSync 2 is the Samsung C49HG90, a stupidly wide 49-inch double 1080p display with a total resolution of 3840 x 1080. It's got an 1800R curve, uses VA technology, and it's certified for DisplayHDR 600. This means it sports up to 600 nits of peak brightness, covers at least 90% of the DCI-P3 gamut, and has basic local dimming.

Now while this panel doesn't support the full DisplayHDR 1000 with 1000 nits of peak brightness for optimal HDR, the Samsung CHG90 does provide more than just an entry-level HDR experience.

There are plenty of supposedly HDR-capable panels that cannot push their brightness above 400 nits and do not support a wider-than-sRGB gamut, but Samsung's latest Quantum Dot monitors do provide higher brightness and a wider gamut than basic SDR displays.

Despite FreeSync 2 being advertised on Samsung's website, the CHG90 does not support it out of the box: you need to download and install a firmware update for the monitor, which isn't a great experience. As explained before, AMD announced FreeSync 2 at the beginning of 2017, but this is the first generation of products actually supporting the technology.

There will likely be many cases where people buy this monitor, hook it up to their PC without performing any firmware updates, and just assume FreeSync 2 is working as intended. The fact you might need to upgrade the firmware is not well advertised on Samsung's website – it's hidden in a footnote at the bottom of the page – and upgrading a monitor's firmware isn't exactly a common practice.

If you buy a supported Samsung Quantum Dot monitor, make sure it's running the latest firmware that introduces FreeSync 2 support; if it is running the right firmware, the Information tab in the on-screen display will show a FreeSync 2 logo.

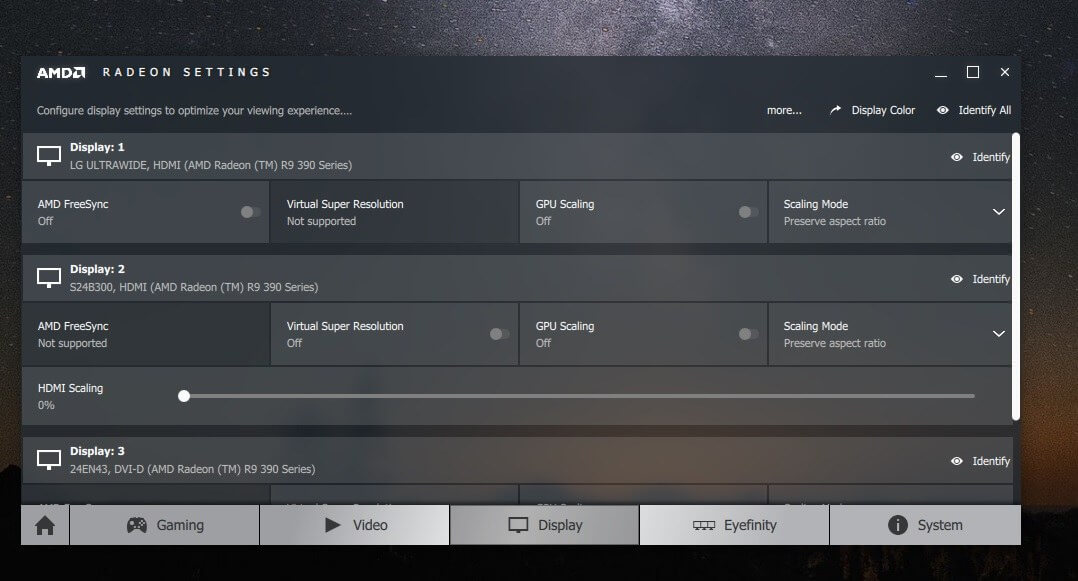

AMD's graphics driver and software utility exacerbate this issue with FreeSync 2 display firmware. While Radeon Settings does indicate when your GPU is hooked up to a FreeSync display, it does not distinguish between FreeSync and FreeSync 2. There is no way to tell within Radeon Settings or any part of Windows that your system is attached to a FreeSync 2 display, so there's no way to check if FreeSync 2 is working, whether your monitor supports FreeSync 2, or if it is enabled.

There was no change to the way Radeon Settings indicated FreeSync support after we upgraded our monitor to the FreeSync 2 supporting firmware either. This is bound to confuse users and does need attention from AMD.

So how does FreeSync 2 actually work and how do you set it up?

Provided FreeSync is enabled in Radeon Settings and in the monitor's on-screen display – and both are enabled by default – it should be ready to go. There is no magic toggle to get everything working and no real configuration options; instead, the key features are either permanently enabled, like low latency and low framerate compensation, or set to be used when required, like HDR.

Using the HDR capabilities of FreeSync 2 does require you to enable HDR when you want to use it. In the case of Windows 10 desktop applications, this means going into the Settings menu, heading to the display settings, and enabling "HDR and WCG". This switches the Windows desktop environment to an HDR environment, and any apps that support HDR can pass their HDR-mapped data straight to the monitor through HDR10. For standard SDR apps, which currently are most Windows apps, Windows 10 attempts to tone map the SDR colors and brightness to HDR as it can't automatically switch modes on the fly.

While Windows 10 has been improving its HDR support with each major Windows update, it's still not at a point where SDR is mapped correctly to HDR. With HDR and WCG enabled, SDR apps look washed out and brightness is lacking. Some apps like Chrome are straight up broken in the HDR mode. There is a slider within Windows for changing the base brightness for SDR content, yet with our Samsung test monitor, the maximum supported brightness for SDR in this mode is around 190 nits, which is well below the monitor's maximum 350 nits when HDR and WCG are disabled.

Now, 190 nits of brightness is probably fine for a lot of users, but it's a bit strange that the slider does not correspond to the full brightness range of the monitor. It's also a different control to the display's on-screen brightness control; if the monitor's brightness is set to less than 100, you'll get less than 190 nits when displaying SDR content.
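To put those brightness figures in context, here is a minimal Python sketch of the PQ transfer function (SMPTE ST 2084) that HDR10 signals use to encode absolute luminance. It only shows where 190, 350 and 600 nits land on the PQ signal range; it is not a model of how Windows 10 actually maps SDR content into HDR, which the testing above does not reveal. The function name `pq_encode` is our own, chosen for illustration.

```python
# SMPTE ST 2084 (PQ) constants, the transfer function used by HDR10.
M1 = 2610 / 16384
M2 = 2523 / 4096 * 128
C1 = 3424 / 4096
C2 = 2413 / 4096 * 32
C3 = 2392 / 4096 * 32
PQ_PEAK_NITS = 10000.0  # PQ is defined for luminance up to 10,000 nits


def pq_encode(nits: float) -> float:
    """Map absolute luminance in nits to a PQ signal value in [0, 1].

    Illustrative helper (name is ours); inputs are clamped to the PQ range.
    """
    y = max(0.0, min(nits, PQ_PEAK_NITS)) / PQ_PEAK_NITS
    y_m1 = y ** M1
    return ((C1 + C2 * y_m1) / (1 + C3 * y_m1)) ** M2


if __name__ == "__main__":
    # Brightness levels quoted in this article for the Samsung test monitor.
    levels = [
        ("SDR slider maximum with HDR and WCG on", 190),
        ("SDR maximum with HDR and WCG off", 350),
        ("DisplayHDR 600 peak", 600),
    ]
    for label, nits in levels:
        print(f"{label}: {nits} nits -> PQ signal {pq_encode(nits):.3f}")
```

Because PQ is defined all the way up to 10,000 nits, all three levels sit below the top of the signal range, and the gap between the 190-nit SDR ceiling and the panel's 600-nit peak is headroom that only HDR content can use.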

If this all sounds confusing to you, that's because it is. In fact, the whole Windows desktop HDR implementation is a bit of a mess, and if you can believe it, earlier versions of Windows 10 were even worse.

This is the case with not just FreeSync 2 monitors, but all HDR displays hooked up to Windows 10 PCs. At the moment, our advice is to disable HDR and WCG when using the Windows 10 desktop and only enable it when you want to run an HDR app, because that way you'll get the best SDR experience in the vast majority of apps that currently don't support HDR.

So how about games? Surely this is the area where FreeSync 2 monitors and HDR really shine, right? Well… it depends. We tried a range of games that currently support HDR on Windows, and we were largely disappointed with the results. HDR implementations differ from game to game, and it seems that right now, a lot of game developers have no idea how to correctly tone map their games for HDR.

The worst of the lot are EA's games. Mass Effect Andromeda's HDR implementation was famously shamed when HDR monitors were first shown off, but the curse of poor HDR continues into Battlefield 1 and the newer Star Wars Battlefront 2. Both games exhibit washed out colors in the HDR mode that look far worse than the SDR presentation, compounded by a general dark tone to the image and weak use of HDR's spectacular bright highlights. In all three of EA's newer games that support HDR, there is no reason whatsoever to enable it, as they look so much better in SDR.

We're not sure why these games look so bad, because reports seem to suggest EA titles also look bad on TVs with better HDR support, and on consoles. We think there is something fundamentally broken with the way EA's Frostbite engine manages HDR and hopefully it can be resolved for upcoming games.

Hitman is one of the older games to support HDR, and it too does not manage HDR well. While the presentation isn't as washed out as with EA's titles, colors are still dull and the image in general is too dark, with little (if any) use of impressive highlights. The idea of HDR is to expand the color gamut and increase the range of brightnesses used, but in Hitman it just seems like everything has become darker and less intense. Again, this is a game that you should play in the SDR mode.

Assassin's Creed Origins has an interesting HDR implementation as it allows you to modify the brightness ranges to match the exact specifications of your display. We're torn as to whether the game looks better in the HDR or SDR modes; HDR appears to have better highlights and a wider range of colors during the day, but suffers from a strange lack of depth at night which oddly makes night scenes feel less like they are actually at night. The SDR mode looks better during these night periods and is perhaps slightly behind the HDR presentation during the day.

On a display with full array local dimming, which this Samsung monitor does not have, Assassin's Creed Origins would look better, but it's not the best HDR implementation we've seen in any case.

The best game for HDR by far is Far Cry 5. AMD tells me this will be the first game to support FreeSync 2's GPU-side tone mapping, with that support arriving in the coming weeks, although right now the game does not support this HDR pipeline. Instead, as with most HDR games, Far Cry 5 uses HDR10 that is passed on to the display for further tone mapping.

Unlike most other games, though, Far Cry 5's HDR10 is actually quite good. The color gamut is clearly expanded to produce more vivid colors, and we're not getting the same washed out look as many other HDR titles. Bright highlights truly are brighter in the HDR mode with great dynamic range, and in general this is one of the few titles that looks substantially better in the HDR mode. Nice work Ubisoft.

Middle-earth: Shadow of War is another game with a decent HDR implementation. When HDR is enabled, this game utilizes a noticeably wider range of colors and highlights are brighter. Again, there is no issue with dull colors or a washed out presentation, which allows the HDR mode to improve on the SDR presentation in basically every way.

How you enable HDR in these games isn't always the same. Most of the titles have a built-in HDR switch that overrides the Windows HDR and WCG setting, so you can leave the desktop as SDR and just enable HDR in the games you want to play in the HDR mode.

Hitman is an interesting case where it has an HDR switch in the game settings but will display a black screen if the Windows HDR switch is also enabled. Shadow of War has no HDR switch at all, instead deferring to the Windows HDR and WCG setting, which is annoying as you'll have to switch between HDR and SDR manually to get the optimal HDR experience in the game, but a decent SDR experience on the desktop.
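The three behaviors described above can be summarized as a small lookup, sketched here in Python. This is purely a note-keeping aid built from our own testing, not anything exposed by Windows, AMD or the games; the names `HdrControl`, `GAME_HDR_CONTROL` and `windows_hdr_should_be_on` are hypothetical, and treating any unlisted title as having an in-game switch that overrides Windows is an assumption based on "most of the titles" behaving that way.

```python
from enum import Enum


class HdrControl(Enum):
    """How a game's HDR mode relates to the Windows "HDR and WCG" setting."""
    IN_GAME_OVERRIDES_WINDOWS = "in-game switch overrides the Windows setting"
    IN_GAME_REQUIRES_WINDOWS_OFF = "in-game switch; black screen if Windows HDR is on"
    FOLLOWS_WINDOWS = "no in-game switch; follows the Windows setting"


# Only the two exceptions named in this article are listed explicitly.
GAME_HDR_CONTROL = {
    "Hitman": HdrControl.IN_GAME_REQUIRES_WINDOWS_OFF,
    "Middle-earth: Shadow of War": HdrControl.FOLLOWS_WINDOWS,
}


def windows_hdr_should_be_on(
    game: str,
    default: HdrControl = HdrControl.IN_GAME_OVERRIDES_WINDOWS,  # assumption
) -> bool:
    """Return True if "HDR and WCG" must be enabled in Windows before launch.

    Unlisted games fall back to `default`, which is an assumption rather
    than a tested result.
    """
    control = GAME_HDR_CONTROL.get(game, default)
    return control is HdrControl.FOLLOWS_WINDOWS


if __name__ == "__main__":
    for title in ["Hitman", "Middle-earth: Shadow of War", "Far Cry 5"]:
        state = "on" if windows_hdr_should_be_on(title) else "off"
        print(f"{title}: set Windows HDR and WCG {state} before launching")
```

Keeping the Windows toggle off by default matches the advice earlier in the article: the desktop stays in SDR, and only games that defer to Windows need the switch flipped.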

While the HDR experience in a lot of games right now is pretty bad and actually a lot worse than the basic SDR presentation, we think there is reason to be optimistic about the future of HDR gaming on PC. Some more recent games like Far Cry 5 and Shadow of War have pretty decent HDR implementations which improve upon the SDR mode in noticeable ways, while many of the games that have poor HDR implementations are somewhat older.

As the HDR ecosystem matures, we should see more Far Cry 5s and fewer Mass Effect Andromedas in terms of their HDR implementation.

We're also not at the stage where any games use FreeSync 2's GPU-side tone mapping. As we mentioned before, Far Cry 5 will be the first to do so in the coming weeks, with AMD claiming more games scheduled for release later this year will include FreeSync 2 support right out of the box.

It'll be interesting to see how GPU-side tone mapping turns out, but it definitely has the scope to improve the HDR implementation for PC games.
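For readers wondering what tone mapping to a display's capabilities means in practice, here is a rough Python sketch. It is not AMD's algorithm or any game's actual pipeline: it simply compares hard clipping at the panel's peak with a basic knee-and-rolloff curve. The 600-nit display peak comes from this monitor's DisplayHDR 600 rating, while the 1000-nit content peak and the knee position are assumptions picked for illustration, as are the function names.

```python
def hard_clip(scene_nits: float, display_peak: float = 600.0) -> float:
    """Naive approach: anything brighter than the panel can show is clipped."""
    return min(max(scene_nits, 0.0), display_peak)


def tone_map_nits(
    scene_nits: float,
    display_peak: float = 600.0,   # DisplayHDR 600 panel, as tested here
    content_peak: float = 1000.0,  # assumed mastering peak of the content
    knee: float = 0.75,            # assumed: rolloff starts at 75% of peak
) -> float:
    """Compress scene luminance into the display's range.

    Below the knee, luminance passes through unchanged. Above it, values
    are scaled linearly so that `content_peak` lands on `display_peak`.
    """
    if not 0.0 < knee < 1.0:
        raise ValueError("knee must be between 0 and 1")
    if content_peak <= display_peak:
        # The display can show everything; no compression needed.
        return hard_clip(scene_nits, display_peak)
    knee_nits = knee * display_peak
    level = min(max(scene_nits, 0.0), content_peak)
    if level <= knee_nits:
        return level
    return knee_nits + (level - knee_nits) * (display_peak - knee_nits) / (
        content_peak - knee_nits
    )


if __name__ == "__main__":
    for nits in [100, 450, 700, 1000]:
        print(
            f"{nits:>5} nits in -> clip {hard_clip(nits):6.1f}, "
            f"tone mapped {tone_map_nits(nits):6.1f}"
        )
```

With hard clipping, a 700-nit highlight and a 1000-nit highlight both come out at 600 nits and the detail between them is lost; the rolloff keeps them distinct at the cost of slightly dimming everything above the knee. Real tone mappers use smoother curves, but the trade-off is the same, and it is why the quality of this step matters so much from game to game.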

However, as it stands right now, we see little reason to buy a FreeSync 2 monitor until more games include decent HDR. It's just too hit or miss – and mostly misses – to be worth the significant investment into a first-generation FreeSync 2 HDR monitor. This isn't the sort of technology to be an early adopter of at the moment, as later in the year we should have a wider range of HDR monitors to choose from, potentially with better support for HDR through higher brightness levels, full array local dimming, and wider gamuts. By then we should also have a better look at the HDR game ecosystem, hopefully with more games that sport decent HDR implementations.

That's not to say you should avoid these Samsung Quantum Dot FreeSync 2 monitors; in fact, they're pretty good as far as gaming monitors go. Just don't buy them specifically for their HDR capabilities or you might find yourself a bit disappointed right now.

Source: https://www.techspot.com/review/1633-freesync-2-hdr-impressions/

Posted by: hughesprieture.blogspot.com
